Postgres is probably the right answer. For most applications, most of the time, a well-indexed relational database will take you further than you think and cause fewer problems than the alternatives.

This post is not about those applications.

This post is about the specific moment when your JOIN chain is five levels deep, your query planner is sweating, and you realize the data you're modeling is not a table — it's a network. And you've been forcing a network into a spreadsheet this whole time.

The Problem With Modeling Relationships Relationally

Relational databases are excellent at storing entities. They're awkward at storing relationships between entities — especially when those relationships are the point.

Consider a simple example: fraud detection in financial transactions.

You want to answer questions like:

- Which accounts have transacted with this flagged account, directly or indirectly?
- Are there any shared devices or IP addresses connecting these two users?
- What's the shortest path between this vendor and a known fraudulent entity?

In Postgres, answering "indirectly connected accounts up to 3 hops away" looks something like:

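    WITH RECURSIVE connected AS (
        -- Hop 1: accounts that 'A' sent money to directly
        SELECT to_account, 1 AS depth
        FROM transactions
        WHERE from_account = 'A'
      UNION
        -- Each further hop follows outgoing transactions, stopping at depth 3
        SELECT t.to_account, c.depth + 1
        FROM transactions t
        INNER JOIN connected c ON t.from_account = c.to_account
        WHERE c.depth < 3
    )
    SELECT to_account, MIN(depth) AS hops
    FROM connected
    GROUP BY to_account;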

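This works. It also slows down sharply as depth and data volume grow: every extra hop is another join against the transactions table, so the work multiplies with the average number of counterparties per account. Relational engines are built to filter and join sets of rows, not to walk from one record to its neighbors.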
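If you want to poke at a depth-bounded recursive CTE without a Postgres instance, here is a minimal sketch that runs one against SQLite's in-memory engine from Python. The `transactions` table, the toy edges, and the `reachable_within` helper are all assumptions made up for illustration, and SQLite's planner is not Postgres's, so treat this as a way to see the shape of the query rather than a benchmark.

```python
import sqlite3

# Hypothetical toy data: A -> B -> C -> D -> E, plus a direct A -> C transfer.
EDGES = [("A", "B"), ("B", "C"), ("C", "D"), ("D", "E"), ("A", "C")]


def reachable_within(conn, start, max_hops):
    """Return {account: fewest hops} for accounts reachable from `start`."""
    rows = conn.execute(
        """
        WITH RECURSIVE connected(account, depth) AS (
            SELECT to_account, 1 FROM transactions WHERE from_account = ?
            UNION
            SELECT t.to_account, c.depth + 1
            FROM transactions t
            JOIN connected c ON t.from_account = c.account
            WHERE c.depth < ?          -- stop expanding at the hop limit
        )
        SELECT account, MIN(depth) FROM connected GROUP BY account
        """,
        (start, max_hops),
    ).fetchall()
    return dict(rows)


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE transactions (from_account TEXT, to_account TEXT)")
conn.executemany("INSERT INTO transactions VALUES (?, ?)", EDGES)

result = reachable_within(conn, "A", 3)
print(result)
```

Every level of recursion joins the whole `transactions` table against the rows found so far, which is the cost that grows as the graph gets denser.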

What a Graph Database Actually Does Differently

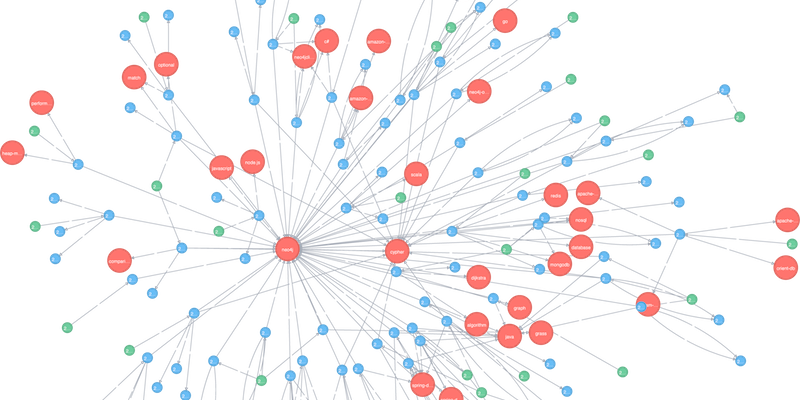

A graph database stores data as nodes and edges natively.

- A node is an entity: a user, a transaction, a product
- An edge is a relationship: PURCHASED, CONNECTED_TO, REPORTED_BY
- Both nodes and edges can carry properties

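The key difference: in a relational database, relationships are reconstructed at query time by matching keys in JOINs. In a native graph database such as Neo4j, each relationship is stored as first-class data, linked directly to the two nodes it connects. Traversal means following those stored links, not recomputing them.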
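To make that difference concrete, here is a rough Python sketch of the two storage shapes. The edge rows, node names, and both helper functions are invented for illustration. A real relational engine would use an index rather than a full scan, so this exaggerates the gap; the point is only where the relationship lives.

```python
from collections import defaultdict

# Relational shape: every relationship is a row in one shared edge table.
# (source, relationship type, destination, properties) -- hypothetical data.
edge_rows = [
    ("alice", "PURCHASED", "laptop", {"qty": 1}),
    ("alice", "CONNECTED_TO", "bob", {"shared_device": True}),
    ("bob", "PURCHASED", "laptop", {"qty": 2}),
]


def neighbours_by_scan(node):
    """Find neighbours by matching rows in the shared table (a join, in spirit)."""
    return [dst for src, _rel, dst, _props in edge_rows if src == node]


# Graph shape: each node carries its own list of outgoing edges.
adjacency = defaultdict(list)
for src, rel, dst, props in edge_rows:
    adjacency[src].append((rel, dst, props))


def neighbours_by_adjacency(node):
    """Find neighbours by reading the edges already attached to the node."""
    return [dst for _rel, dst, _props in adjacency.get(node, [])]


print(neighbours_by_scan("alice"))
print(neighbours_by_adjacency("alice"))
```

Both functions return the same neighbours. The second one never looks at edges that belong to other nodes, which is the property that keeps multi-hop traversals cheap.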

The same fraud query in Cypher (Neo4j's query language):

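    // Follow outgoing TRANSACTED_WITH edges for 1 to 3 hops.
    // Drop the arrowhead to follow transactions in both directions.
    MATCH path = (a:Account {id: 'A'})-[:TRANSACTED_WITH*1..3]->(connected:Account)
    RETURN connected, min(length(path)) AS hops
    ORDER BY hops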

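Cleaner to write. Usually faster for deep traversals over large datasets, because each hop follows stored relationships instead of re-joining a table. And, importantly, easier to reason about, because the query shape mirrors the problem shape.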
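Under the hood, a variable-length pattern like `*1..3` behaves roughly like a hop-limited breadth-first search. The sketch below is a plain-Python approximation, not how Neo4j actually executes the query. The `within_hops` function and the sample edges are hypothetical, and the `directed` flag is an assumption added so you can try both readings of the pattern.

```python
from collections import deque


def within_hops(edges, start, max_hops, directed=False):
    """Map each account reachable from `start` to its fewest hops (1..max_hops)."""
    adjacency = {}
    for src, dst in edges:
        adjacency.setdefault(src, set()).add(dst)
        if not directed:
            adjacency.setdefault(dst, set()).add(src)

    hops = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if hops[node] == max_hops:
            continue  # do not expand past the hop limit
        for neighbour in adjacency.get(node, ()):
            if neighbour not in hops:  # first visit is the shortest in BFS
                hops[neighbour] = hops[node] + 1
                queue.append(neighbour)

    del hops[start]  # report only the other accounts
    return hops


edges = [("A", "B"), ("B", "C"), ("C", "D"), ("D", "E"), ("X", "A")]
print(within_hops(edges, "A", 3))
print(within_hops(edges, "A", 3, directed=True))
```

The loop only ever touches the neighbours of nodes it has already reached, so the work is bounded by the size of the neighbourhood rather than the size of the whole dataset.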

Where Graph Databases Actually Win

Not every problem needs a graph. But some problems are almost impossible to model cleanly without one.

🔗 Relationship-heavy domains

| Use Case | Why Graph Wins |
| --- | --- |
| Fraud detection | Multi-hop connection traversal at speed |
| Recommendation engines | "Users like you also liked..." is a graph query |
| Knowledge graphs | Entities and their semantic relationships |
| Access control / permissions | Role hierarchies, inherited permissions |
| Supply chain analysis | Dependency chains, risk propagation |
| Social networks | Follows, mutual connections, influence mapping |

The common thread: the relationships between entities carry meaning, and you need to query across those relationships at depth.

A Real Example — GST Reconciliation

GST reconciliation in India sounds like a spreadsheet problem. On the surface it is: match invoices, find discrepancies, flag mismatches.

But the interesting questions are relational:

- Which vendors consistently file late and how does that propagate risk to their buyers?
- Are there vendor clusters where a single bad actor affects a network of downstream filers?
- What's the trust score of a vendor based on their transaction history and their counterparties' histories?

These are graph questions. Forcing them into SQL means recursive CTEs, self-joins, and query plans that hurt to look at.

Modeling the same data in Neo4j:

(:Vendor)-[:FILED_INVOICE]->(:Invoice)-[:BILLED_TO]->(:Buyer)

(:Vendor)-[:CONNECTED_TO {shared_pan: true}]->(:Vendor)

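Suddenly, vendor risk scoring becomes a traversal. Cluster detection becomes a call into a graph algorithm library (Neo4j's Graph Data Science library includes community detection) rather than hand-written SQL. The queries read like the problem statement.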
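To get a feel for what cluster detection means here, the sketch below groups vendors into connected components over their CONNECTED_TO links and flags any cluster that contains a late filer. It is a plain-Python stand-in using union-find, not Neo4j's implementation. The vendor IDs, the links, and the `late_filers` set are all made-up sample data.

```python
def vendor_clusters(vendors, links):
    """Group vendors into connected components over CONNECTED_TO links."""
    parent = {v: v for v in vendors}

    def find(v):
        # Walk up to the cluster representative, shortening the path as we go.
        while parent[v] != v:
            parent[v] = parent[parent[v]]
            v = parent[v]
        return v

    for a, b in links:
        parent[find(a)] = find(b)  # merge the two clusters

    clusters = {}
    for v in vendors:
        clusters.setdefault(find(v), set()).add(v)
    return list(clusters.values())


# Hypothetical sample: V1-V2-V3 share PANs in a chain, V4-V5 form a second group.
vendors = ["V1", "V2", "V3", "V4", "V5"]
links = [("V1", "V2"), ("V2", "V3"), ("V4", "V5")]
late_filers = {"V3"}

clusters = vendor_clusters(vendors, links)
exposed = [c for c in clusters if c & late_filers]
print(exposed)
```

Here a single late filer, V3, puts V1 and V2 in an exposed cluster even though neither is linked to V3's filings directly. That is the kind of propagation a per-invoice spreadsheet match would miss.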

The Learning Curve Is Smaller Than You Think

The two things that trip people up:

- Thinking in graphs instead of tables

This is the real shift. You stop asking "what tables do I need?" and start asking "what entities exist, and how do they relate?"

A useful exercise: take any feature you're building and draw it on a whiteboard as nodes and arrows. If that drawing maps naturally to your data model, a graph database probably fits.

- Cypher syntax

Cypher is Neo4j's query language and it's genuinely readable once it clicks:

    // Find all products purchased by users who also purchased product X
    MATCH (u:User)-[:PURCHASED]->(x:Product {id: 'X'})
    MATCH (u)-[:PURCHASED]->(other:Product)
    WHERE other.id <> 'X'
    RETURN other.name, count(u) AS co_purchasers
    ORDER BY co_purchasers DESC

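The -[:PURCHASED]-> arrows in the query literally represent edges in the graph: the relationship type sits in the square brackets and the arrowhead gives the direction. Once you internalize that, the rest follows naturally.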
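If it helps to see the same logic outside Cypher, here is a small Python sketch of the co-purchase question: find everyone who bought X, then count what else they bought. The `co_purchased` function and the purchase pairs are hypothetical. One difference from the query above: this version counts each user at most once per product, even if they bought it several times.

```python
from collections import Counter


def co_purchased(purchases, product_id):
    """Rank other products by how many buyers of `product_id` also bought them."""
    # First pattern: users with a PURCHASED edge to the target product.
    buyers = {user for user, product in purchases if product == product_id}

    # Second pattern: every other product those same users purchased.
    counts = Counter()
    for user, product in set(purchases):  # set() drops repeat purchases
        if user in buyers and product != product_id:
            counts[product] += 1
    return counts.most_common()


# Hypothetical (user, product) purchase edges.
purchases = [
    ("u1", "X"), ("u1", "Y"),
    ("u2", "X"), ("u2", "Y"), ("u2", "Z"),
    ("u3", "Y"),  # u3 never bought X, so this purchase is ignored
]
print(co_purchased(purchases, "X"))
```

The two steps in the function line up with the two MATCH clauses, which is a decent sanity check when you are learning to read patterns.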

When to Stick With Postgres

Graph databases are not a default upgrade. Reach for them when you need them, not before.

Stick with Postgres if:

- Your data is primarily tabular and your queries are primarily filters and aggregations
- You need strong ACID transactions across complex multi-table writes and already rely on Postgres constraints to enforce them
- Your team knows SQL and the onboarding cost of a new query language isn't worth it
- Relationships exist but aren't frequently traversed at depth

Consider a graph if:

- You're writing recursive CTEs to answer basic product questions
- The word "network," "graph," or "connections" appears in your product spec
- Relationship depth and directionality carry semantic meaning
- You need graph algorithms out of the box: shortest path, centrality, community detection
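Of the algorithms named in that last point, shortest path is the easiest to sketch by hand. The version below is a plain breadth-first search over an adjacency dictionary, written only to show what the algorithm does. The `shortest_path` function and the sample graph are invented, and a graph database would run its own optimized implementation instead.

```python
from collections import deque


def shortest_path(adjacency, source, target):
    """Return the nodes on a fewest-hops path from source to target, or None."""
    previous = {source: None}  # also serves as the visited set
    queue = deque([source])
    while queue:
        node = queue.popleft()
        if node == target:
            # Walk the back-pointers to rebuild the path.
            path = []
            while node is not None:
                path.append(node)
                node = previous[node]
            return path[::-1]
        for neighbour in adjacency.get(node, ()):
            if neighbour not in previous:
                previous[neighbour] = node
                queue.append(neighbour)
    return None


# Hypothetical directed graph: two routes from the vendor to a flagged entity.
adjacency = {
    "vendor": ["acct1", "acct2"],
    "acct1": ["acct3"],
    "acct2": ["fraudster"],
    "acct3": ["fraudster"],
}
print(shortest_path(adjacency, "vendor", "fraudster"))
```

Writing this once is instructive. Maintaining it, plus centrality and community detection, on top of SQL tables is where a dedicated graph engine starts to earn its place.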

The Bottom Line

Graph databases are a specialized tool. The mistake isn't using them — it's either reaching for them too early, or never reaching for them at all when the problem clearly calls for it.

The signal is simple:

If the interesting questions in your product are about how things connect, not just what things exist — you're modeling a graph. You might as well store one.

Neo4j has a free tier, excellent documentation, and a sandbox environment you can spin up in minutes. The next time you catch yourself writing a four-level JOIN or a recursive CTE to answer what feels like a simple question — it's worth 30 minutes to model the same problem as a graph and see what happens.

You might be surprised how much cleaner the answer gets.