A glitch in Canva’s AI-driven Magic Layers feature has thrust the design platform into controversy after it was found to replace the word “Palestine” with “Ukraine” in user designs. The issue came to light when a user on X (formerly Twitter), @ros_ie9, shared evidence of the error. The problem sparked immediate backlash online, particularly given the political sensitivities tied to the terms, and raised concerns over how artificial intelligence tools handle language moderation. Canva quickly issued a public apology and said it had resolved the issue.

The Role of Canva's AI and the Controversy It Sparked

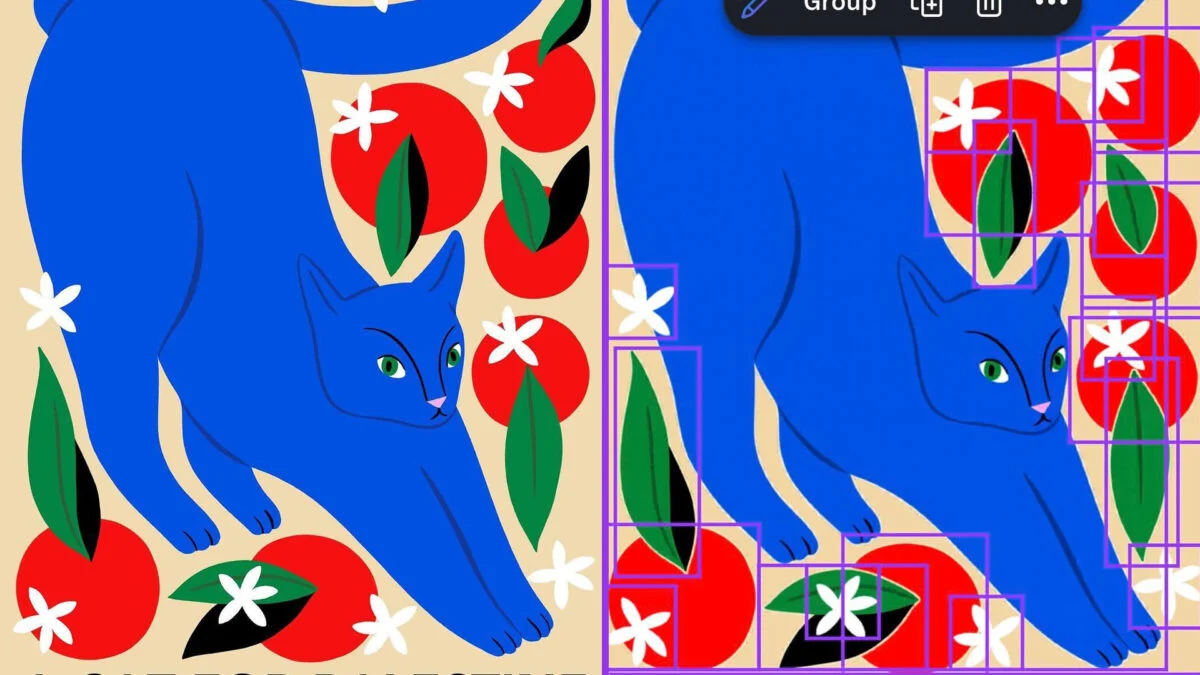

Magic Layers is one of Canva’s newest AI tools, part of a broader push to integrate generative AI features into its design platform. Officially launched earlier this year, it is designed to break flat images into separate, editable components. The tool is not meant to alter visible content in any way; its purpose is to make individual design elements easier to adjust.

However, this core principle was violated when users discovered that the word “Palestine” in a design was replaced with “Ukraine” after Magic Layers processed the image. Other politically charged words, such as “Gaza,” reportedly remained unaffected. Intense criticism quickly followed, with viral social media posts pointing out the cultural and political sensitivity of such an error.

In response, Canva spokesperson Louisa Green confirmed that the issue was isolated to the word “Palestine” and emphasized that the company acted swiftly to fix it. “We take reports like this very seriously, and we’re putting additional checks in place to help prevent this in the future,” said Green in a statement to The Verge. Green also apologized for “any distress this may have caused.”

A Wobble in Canva’s AI Ambitions

The incident comes at a time when Canva has been positioning itself as a formidable player in the AI-powered design space. Competing with industry heavyweights like Adobe, Canva recently rolled out a major AI overhaul touted as the “next era of creation.” This update brought several features leveraging cutting-edge generative AI, including automatic graphic generation, editable layers, and background removal tools.

While these features have been praised for making complex designs more accessible, the Magic Layers error underscores the risks involved in deploying AI to millions of users. AI systems rely heavily on training data and preset moderation rules, making them susceptible to biases or unintended outputs. In this case, it remains unclear whether the word swap resulted from faulty programming logic, incomplete moderation protocols, or even external tampering with the model’s inputs. Regardless of the root cause, the misstep raises questions about how robust Canva’s quality assurance processes are when it comes to politically sensitive regions or phrases.
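To make the quality assurance point concrete, here is a minimal sketch of the kind of regression check that could flag a word swap like this one. It assumes the text of a design can be read out as plain strings before and after processing. The function name `text_changes` is hypothetical and does not describe Canva’s actual tooling or pipeline.

```python
from difflib import SequenceMatcher


def text_changes(before: str, after: str) -> list[tuple[str, str]]:
    """Return (original, replacement) word runs that differ between two strings.

    Hypothetical helper: 'before' is the text in the flat image, 'after' is
    the text found in the editable layers produced by the tool.
    """
    a, b = before.split(), after.split()
    matcher = SequenceMatcher(a=a, b=b, autojunk=False)
    changes = []
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        # Any opcode other than "equal" means visible text was altered.
        if tag != "equal":
            changes.append((" ".join(a[i1:i2]), " ".join(b[j1:j2])))
    return changes


# A tool that must not alter visible content should yield an empty list.
print(text_changes("Free Palestine now", "Free Ukraine now"))
```

Run against a list of place names and other sensitive terms, a check of this kind would fail as soon as any word came out different from what went in.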

The Competitive Context: Why This Matters

Canva’s stumble is particularly significant in the context of its rivalry with Adobe, which in recent years has doubled down on incorporating AI into tools like Photoshop and Illustrator. Adobe Firefly, the company’s generative AI suite, has been widely celebrated for balancing creativity with responsible AI behavior, including embedding safeguards to prevent misuse or offensive outputs. By comparison, Canva’s rapid AI rollout now appears less polished, potentially undermining the credibility of its ambitious vision at a critical time when enterprises and small businesses alike are evaluating these platforms for creative needs.

Moreover, ethical expectations for AI moderation are rising across industries, making incidents like this more significant. As explained by AI ethics expert Dr. Anita Sharma, “AI content tools will be held to the highest standards, especially when they intersect with topics like geopolitics or identity. An error like this can degrade user trust overnight, even if unintentional.” For a platform available in over 190 countries and claiming more than 125 million active users, losing trust would carry major implications for Canva’s future expansion.

User Reaction and Early Fixes

The public reaction to this blunder has been swift and critical, particularly across platforms like X, where many users replicated the issue before Canva was able to patch it. Some described the error as careless, while others called it a reflection of deeper systemic issues in AI moderation and design. Although Canva claims to have resolved the problem, the online uproar indicates that even brief technological missteps can resonate broadly when cultural sensitivities are involved.

Interestingly, several users noted that additional tests with Magic Layers did not yield changes in other words or phrases. This suggests that the problem was limited to a single term, consistent with Canva’s statement, and wasn’t part of a broader pattern of word-swapping behavior. Nonetheless, trust in the feature appears shaken. Creators and freelancers who rely on Canva for professional projects are likely to question whether they can fully depend on AI design features without fearing unintended changes.

Implications for Canva and AI Ethics

Canva’s swift response to the Magic Layers error indicates an awareness of the reputational stakes involved, but this incident serves as a cautionary tale for any company leveraging AI in sensitive or public-facing tools. Expanding the use of AI in creation isn’t just a technical challenge—it’s also an ethical one. Companies must evaluate whether their training data, implementation processes, and quality assurance protocols adequately address the nuances of language, culture, and global politics.

Looking ahead, Canva’s ability to navigate this moment and reinforce user trust will be critical. Enhancing transparency around how its AI models operate, rolling out more rigorous testing procedures, and clarifying moderation policies could be steps in the right direction. Given the broader AI arms race in the creative software market, ensuring robust safeguards might not only repair user relationships but also give Canva a competitive edge. Mistakes happen, but the real question is how those mistakes are addressed—and whether they lead to a smarter, more reliable product in the future.